With the advent of ChatGPT and other text and image generation tools, generative artificial intelligence (AI) solutions offer truly revolutionary prospects for your business. Generative AI solutions will have more impact on our businesses and ways of working than the arrival of the Internet.

In this presentation, Olivier shows you how to leverage these technologies right now to go beyond simple question-and-answer scenarios and achieve your development goals while accelerating your capabilities.

Through concrete examples and in-depth analysis, Olivier explores the various applications of generative AI, as well as the limitations and challenges associated with these tools, which, it must be reiterated, are still in their infancy.

Olivier also addresses the ethical considerations related to the use of generative AI tools and proposes ways to ensure the quality of solutions delivered using generative AI.

Series on generative artificial intelligence

This article is part of a series we have produced to help businesses better understand generative AI and its possibilities.

- Automated Generation of Metadata: Solutions for SAP, Oracle, and Microsoft Dynamics Inventories

- The Essential Use Cases of Generative Artificial Intelligence

- Why you should use ChatGPT in a business context

Conference on demand (French)

In-Depth Verbatim Conference Translation from French

[This article is a verbatim transcript, translated from French, of the conference presented by Olivier Blais:

‘How to Harness Generative Artificial Intelligence Solutions in Business Without Drift’.

It is worth noting that this transcription and translation were done using artificial intelligence tools. A job that would have taken 3 to 5 hours was completed in just a few minutes.]

Introduction

Olivier – Speaker

Thank you very much for your time. It’s greatly appreciated. I know we’re all busy here on a Thursday morning. We’re anxious to get back to the office, but at the same time, I think generative AI has something special. I think it taps into the imagination. I can understand why you’re here. I’m also super excited to talk about this topic. I want to know, has everyone arrived? The absentees are missing out, so let’s get started. Before we begin the presentation, I had a little survey for you. By a show of hands, I was wondering who in the room has certain concerns about generative AI? I see hands going up very quickly. Perfect. Thank you. Also, I had a question about the capabilities of generative AI. Who is excited about generative AI? Who wants to use it? Excellent. We’ll send a sales representative to talk to you shortly. No, that’s a joke. It’s a joke, but not really. I’m glad to have seen both worried and excited hands. In fact, for me, there’s still a duality between the two.

The duality of generative AI

[01min 24s]

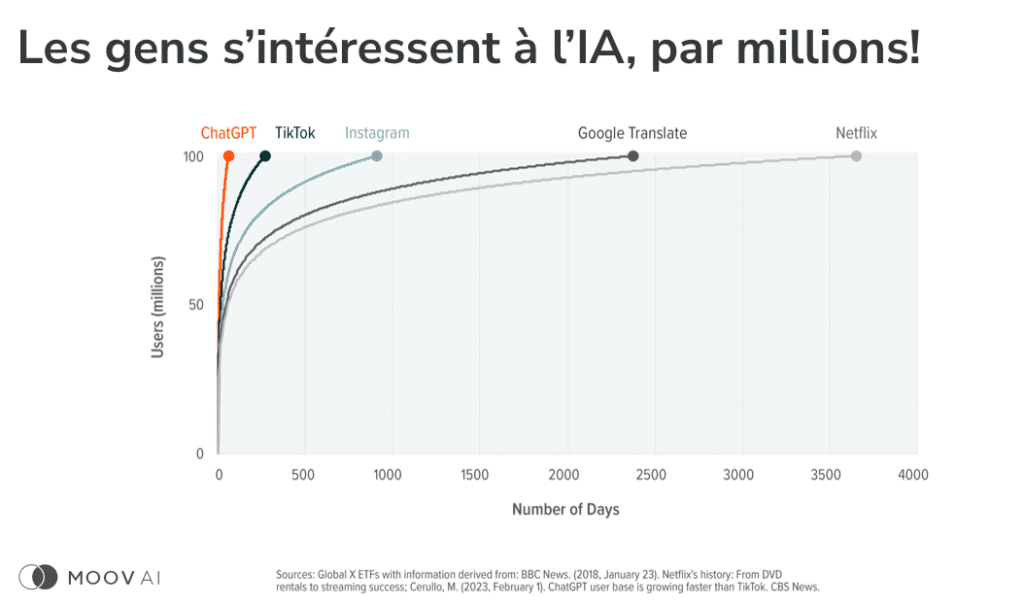

I am confident but cautious about the technology. That’s what we’re going to talk about today. That’s why we’re discussing generative AI, but without going too far. How to effectively use these technologies to generate benefits without excessive risk. What I’m going to do is… Excuse me, first, I’m going to introduce myself. My name is Olivier Blais, co-founder of Moov AI. I am in charge of innovation for the company and a speaker. I speak from time to time; I like listening to myself speak. What we’re going to do is talk about generative AI, of course. We’ll start from the beginning. We’ll introduce the topic, but we’ll go further. Why? Because we are all AI developers at the moment. It’s special, but with generative AI, it’s a paradigm shift. I’m no longer just speaking to a couple of mathematicians who studied artificial intelligence. I’m speaking to Mr. and Mrs. Everyone because now everyone has the opportunity to use these technologies to generate results. So, everyone needs to be aware, everyone needs to understand how to effectively exploit the technology.

The Hype Cycle of Generative AI

[02min 38s]

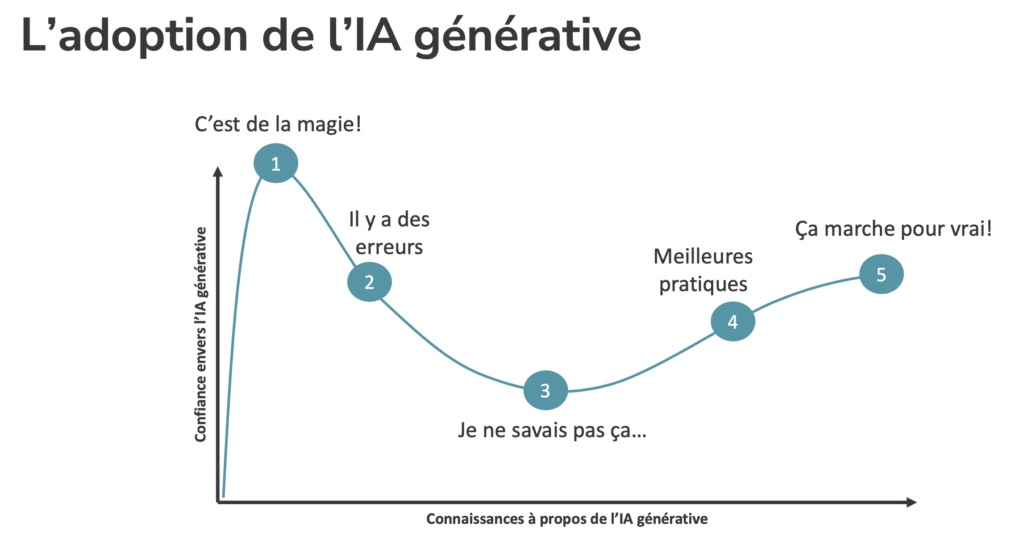

But don’t worry, I’ll try to keep it fairly light. We won’t dive into mathematics, I promise you. And I’ll also talk about responsible AI, which is key. Making sure that when we do things, we do them correctly. So, we’ll delve into a slightly more theoretical portion, but first, I’m curious to know more about where you are in the hype cycle. It’s extremely hyped right now, ChatGPT, it’s all you hear about. I don’t even go on LinkedIn anymore because that’s all it is now. GPT this, GPT that. But in fact, it’s really a curve. When I saw this curve for the first time, it stuck with me. And everyone here, whether you realize it or not, you’re at one of these stages of the curve. I won’t ask everyone where they are, that would be really complex, but I find it interesting to know what the upcoming stages are in our journey. For me, initially, it was around January, I would say. At Moov AI, we have been using GPT-2 and GPT-3 since 2019.

[03min 56s]

We found ourselves making a documentary, for example, with Matthieu Dugal where we generated a conversational agent. It’s been a while, but since we heard about ChatGPT, that’s when it really awakened people and created hype. At first, we think it’s magic. You input something like “give me a poem about haystacks,” and it generates a poem about haystacks, and it’s incredible. It really feels like magic, but at some point, when you start using it for something useful, that’s when you fall into small “rabbit holes,” that’s when you encounter irregularities. For example, you might question it about a person, a public figure, and then it gets everything wrong. You might use it for calculations, and it can make mistakes in calculations. You scratch your head and think, “Okay, what’s happening?” And increasingly, you find yourself identifying weaknesses in these models. Ultimately, it’s not necessarily magic.

Risks and Limitations of Generative AI

[05min]

It’s a lot on the surface. However, there are some issues to consider with ChatGPT. Firstly, it’s not updated daily. The last update was in January 2021. This means that if you ask questions about current news or events, it may not be aware of them. So, we can identify this as a weakness. Additionally, when using ChatGPT, your data is transmitted to OpenAI, creating a potential security vulnerability. This reveals the weaknesses in these otherwise cool tools. While there are ways to mitigate these risks, it’s essential to be aware of and understand how to properly leverage the technology, using best practices and learning from examples.

Advantages and Best Practices of Generative AI

[06min 12s]

Despite these challenges, it’s fascinating to see what can be achieved with generative AI. While we acknowledge the problems, we strive to overcome them and mitigate the identified risks. Many people are currently using generative AI in functional, deployed applications. It works. And now, we can move forward and apply the same principles to real-world use cases. Speaking of applications, let’s take a step back. Our focus is not just on ChatGPT; it’s one solution among many. For instance, Google has its own solution. I refer to this as B2C (Business-to-Consumer) – something that is accessible to everyone, a democratized technology meant for widespread utilization.

Generative AI Tools for Businesses

[07min 34s]

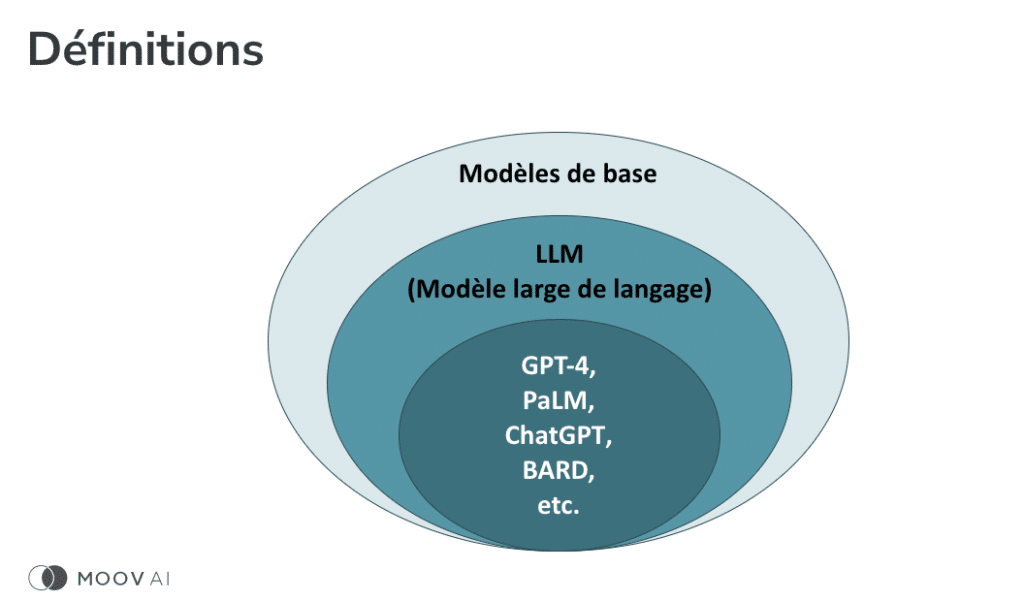

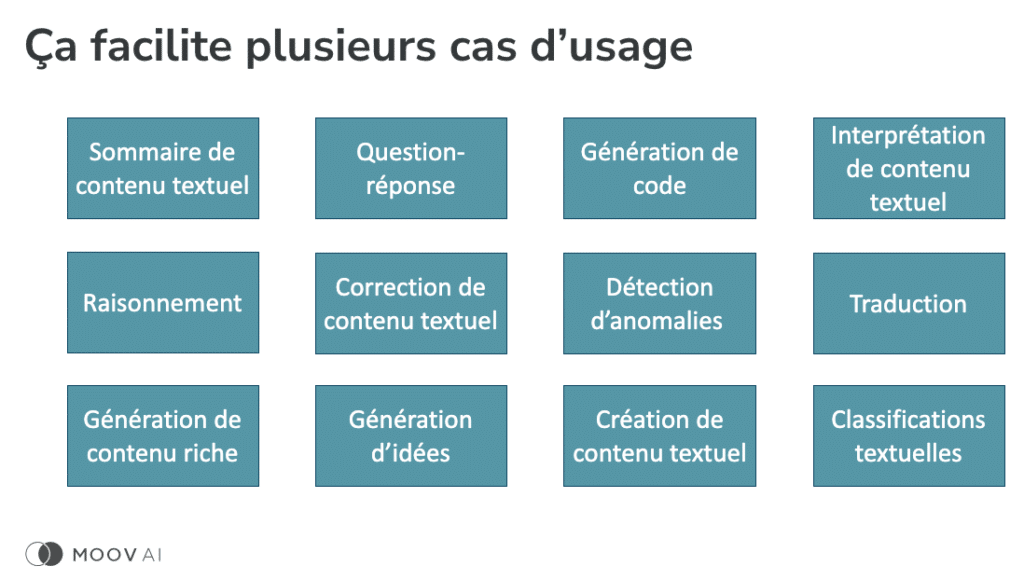

It’s exciting because generative AI enables various kinds of text analysis and offers numerous use cases that benefit everyone. There are also tools available specifically for businesses (B2B). It’s important to understand the distinction between the two. We don’t recommend using consumer-oriented tools for enterprise purposes. Instead, we suggest utilizing tools tailored for businesses. Solutions like GPT-4, PaLM, ChatGPT, and BART are just a few examples among hundreds. We refer to these solutions as Large Language Models (LLMs). Some of you may have heard of LLMs before, and it’s good to see a few hands raised, indicating familiarity. LLMs are language models trained on scraped internet data from various sources, making them highly proficient in understanding and generating text.

Foundation Models and Language Models

[08min 55s]

This is great because it allows us to do everything we currently do with the technologies we use in our daily lives. However, it fundamentally stems from an approach called foundation models. This approach has been around for over ten years. It’s the ability to model the world. It may sound poetic, “I’m modeling the world,” but that’s essentially what it is. It means that there are human functions that we don’t need to recreate every time, such as image detection. We could create our own model to tell cats from dogs, another model to recognize numbers on a check, and yet another model to identify intruders on a security camera. We could develop them from scratch, but it would require millions of images. Instead, the concept of foundation models emerged, and we thought, “Wait, why don’t we invest more time upfront?” We can develop a model that excels at identifying elements in an image.

The Paradigm Shift with Generative AI

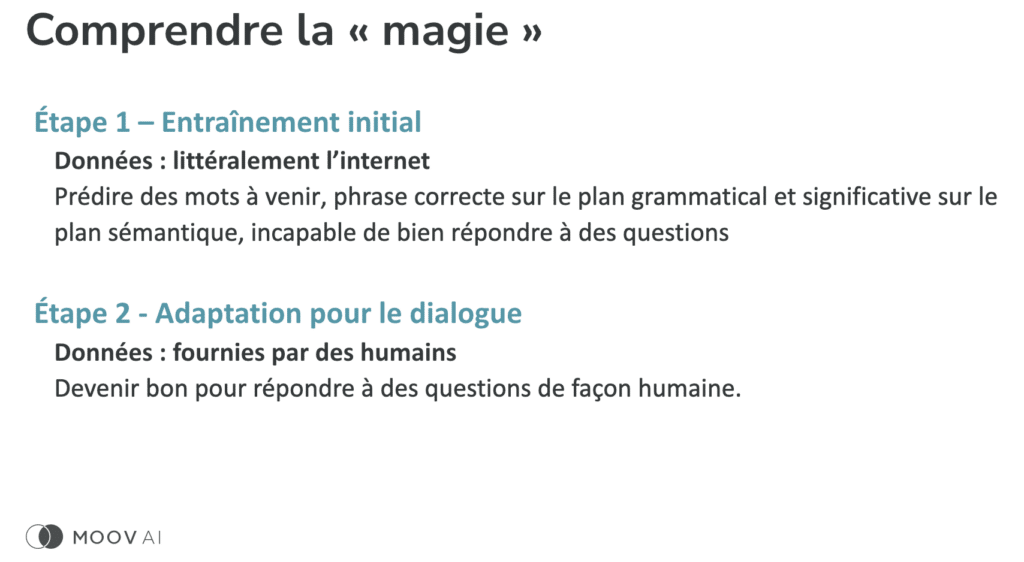

Afterward, we can leverage this capability for tasks related to image detection. It started with image detection, but we quickly realized the benefits in language as well. That’s why we now have generative AI tools that allow us to generate poems, summaries, and perform many other tasks. How were these tools created? They were developed by gathering vast amounts of publicly available internet data. Personally, I don’t have the capacity to do this, but major web players, including the GAFAM companies, can seize this opportunity because they have the storage capacity and advanced capabilities that surpass other organizations. As a result, they have developed highly effective models that understand the world but lack depth. Has anyone here used GPT-3, for example? OGs, I like it—people who were there before ChatGPT. With GPT-3, if you asked it a question, it would essentially provide you with what Wikipedia would say. It wasn’t very interesting from a user experience perspective. This is what sets the new solutions apart. As a second step, there has been an emphasis on dialogue adaptation. It’s fun how we are now getting responses. Organizations have started prioritizing the user experience. This speaks volumes about being more attuned to what users want and how they want to leverage the technology. It makes the experience more enjoyable and motivates people to use and explore it further. Additionally, it has significantly improved the quality because humans prefer to be responded to in a human-like manner. This has increased our adoption of the technology. And now, here we are. Let me explain the difference a bit because it changes many paradigms compared to regular AI solutions. With a regular AI solution, we typically have teams that gather data and train models. Often, each problem has its own model.

[13min 04s]

And after that, once they’ve trained it, then it remains to be deployed so that it can be reused whenever there are new updates or new things that come up. Let me give you an example. Back then, because it’s different now, there was a team at Google, for instance, that focused on sentiment analysis. They would analyze tweets or texts and determine whether the sentiment expressed was positive or negative. So, you had a team solely dedicated to that. They would gather information from the web, identify the associated sentiment, train their model, and then deploy it. From then on, every time a new comment came in, they could identify whether it was positive or not. This had to be done for sentiment analysis, and the same process applied to every new thing you wanted to model. But that’s not the case anymore. The paradigm has changed because there are so many possibilities with language models that you no longer need…

The Possibilities of Generative AI

[14min 20s]

Firstly, it’s already deployed. So, for the average person, for businesses, you no longer need to go through the initial training phase. And that changes the game because it significantly reduces the scope of your project. It’s no longer a project that costs millions to produce because you’ve cut a huge portion of your development costs. Moreover, you don’t need to gather extremely precise data to address the specific problems your model was trained on. Here, you input a question or a prompt, and if your prompt is well-crafted, the result is what you expected to receive. These results now have limitless possibilities. You can have text, predictions, code, tables, images. There’s so much you can get. The benefits we can achieve with these new technologies are incredible. However, I appreciate the duality in the comment made by Google’s CEO on 60 Minutes a few weeks ago: there is an urgency to deploy it in a beneficial way, but it can be very harmful if deployed wrongly.

[15min 44s]

That’s the real duality. There are so many positives that can come from it, but it can be catastrophic if done incorrectly. It’s the CEO of Google who said that, but honestly, it’s the responsibility of each individual to use these technologies appropriately. If we use them correctly, we can minimize the risks because there are risks. Here, firstly, one risk is that it’s used by everyone. I understand that we might have around 60 people here, but there are hundreds of millions of people who have used ChatGPT, for example. So, it’s now being used by almost everyone, and it’s crucial for each person to be aware of their usage because we could end up causing a disaster if we exploit it in the wrong way. I’ll skip that part. Here, I’ll give examples of things we can do because it’s always helpful to be able to… Sorry, I’ll go back here. These are different use cases, don’t worry, I won’t go through all of them, but there are plenty of use cases. Many of them are related to text analysis.

[17min 00s]

We’re able to analyze a lot of texts, perform classification, and identify elements within a text. Additionally, we can also make predictions, even very conventional ones. So, we can reproduce certain machine learning models using LLM tools. By the way, everything we do with text, we can do with code as well. There might be people who… We’re used to writing text in French or English, but for those who are used to writing in Python, for example, or in C++ or C#, it’s even more efficient because it’s explicit. When you write a function, it’s explicit. Your language is implicit, all the sentence structures, what the words mean. So, let’s consider that the sky is the limit in terms of capabilities. As I mentioned earlier, I think we can agree that question/answer tasks are extremely good by default. So, if we want to build a chatbot, let’s ensure that the chatbots we want to develop have the ability to generate responses. We’ve reached that stage now. What we develop should have the ability to generate responses.

[18min 21s]

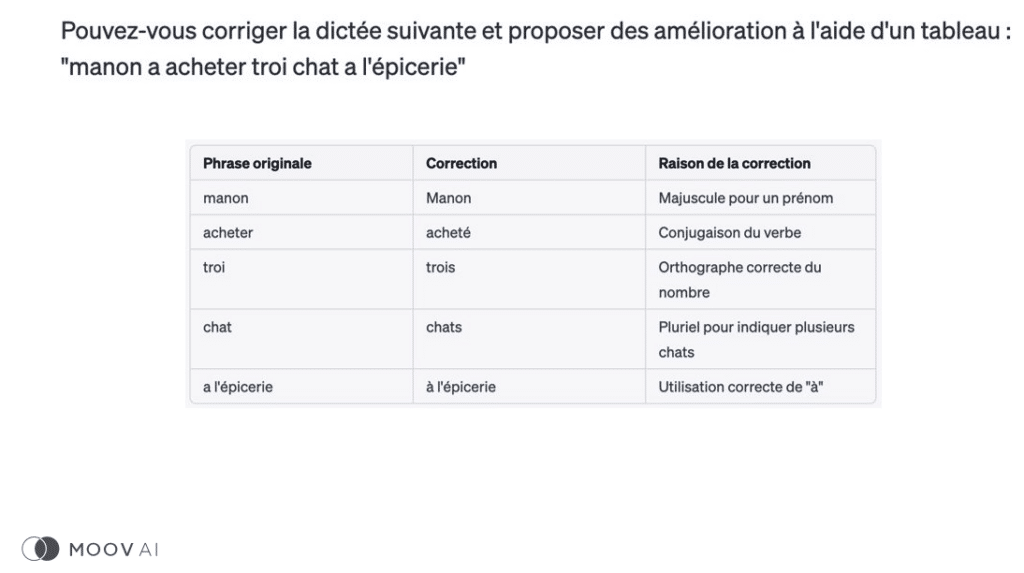

What it allows is the ability to address a much wider range of questions. It also reduces development time. You don’t have to think about each individual scenario separately. You give precise instructions to your chatbot, and most of the time, it will provide appropriate responses. You can also control it, which is a possibility. Another element that I really like is the fact that we can trust these models. It’s not just about saying, “Let’s ask questions and see what happens.” We can trust these solutions in certain cases. For example, here we could correct dictations with solutions. For instance, I posed a question here. I tried a little dictation of my own: “Manon bought three cats at the grocery store.” What we can see is the correction that is made. Truly, it was able to go much further. It has been demonstrated that for text correction, it’s incredible. It works really well, and we can trust tools like this for sensitive matters such as dictation correction for our children.

[19min 47s]

By the way, something quite amusing is that if I ask the Generative AI platform to correct the sentence “Manon buys three cats at the grocery store,” besides correcting the mistakes, they will say that it’s not really at the grocery store where you buy a cat. That’s interesting to know. Otherwise, I don’t really encounter the cat. It’s not necessarily the cat that you want to keep for years. That’s another example. Earlier, I mentioned it when talking about sentiment analysis. But these are things we can do. All the existing APIs will be rapidly replaced. Here, I asked the same question: can you identify the sentiment in the following sentences? You provide the list of sentences, and they return the correct sentiment. That’s exactly the tool that will be used in the upcoming APIs. Once again, we can trust the tools as long as they are used appropriately and optimized for the task you want to accomplish. I even pushed the envelope a bit because earlier, I talked a lot about the risk. Yes, there is a risk, but it’s important to understand the risk properly.
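The sentiment request described above (hand the model a list of sentences, get one label back per sentence) could be sketched as follows in Python. This is only an illustration under stated assumptions: `call_llm` stands for whatever function sends a prompt to your chosen model and returns its text reply, and `fake_llm` is a hypothetical stand-in so the sketch runs without any external service. The label set and answer format are also assumptions, not something prescribed in the talk.

```python
from typing import Callable

# Assumed label set; adjust to the task at hand.
LABELS = ("positive", "negative", "neutral")


def build_sentiment_prompt(sentences: list[str]) -> str:
    """Number the sentences and ask for one '<number>: <label>' line each."""
    numbered = "\n".join(f"{i}. {s}" for i, s in enumerate(sentences, start=1))
    return (
        "Identify the sentiment of each sentence below.\n"
        "Answer with one line per sentence in the form '<number>: <label>', "
        f"where <label> is one of: {', '.join(LABELS)}.\n\n"
        f"{numbered}"
    )


def parse_sentiments(response: str, expected: int) -> list[str]:
    """Read the labels back; anything missing or malformed becomes 'unparsed'."""
    results: dict[int, str] = {}
    for line in response.splitlines():
        number, sep, label = line.partition(":")
        label = label.strip().lower()
        if sep and number.strip().isdigit() and label in LABELS:
            results[int(number.strip())] = label
    return [results.get(i, "unparsed") for i in range(1, expected + 1)]


def classify(sentences: list[str], call_llm: Callable[[str], str]) -> list[str]:
    """Build the prompt, call the model, and parse its reply."""
    reply = call_llm(build_sentiment_prompt(sentences))
    return parse_sentiments(reply, len(sentences))


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns a canned reply."""
    return "1: positive\n2: negative"


print(classify(["I love this product.", "The delivery was a disaster."], fake_llm))
```

Flagging unparseable lines instead of guessing a label keeps the output auditable, which fits the point that these tools can be trusted only when used appropriately for the task.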

[21min 05s]

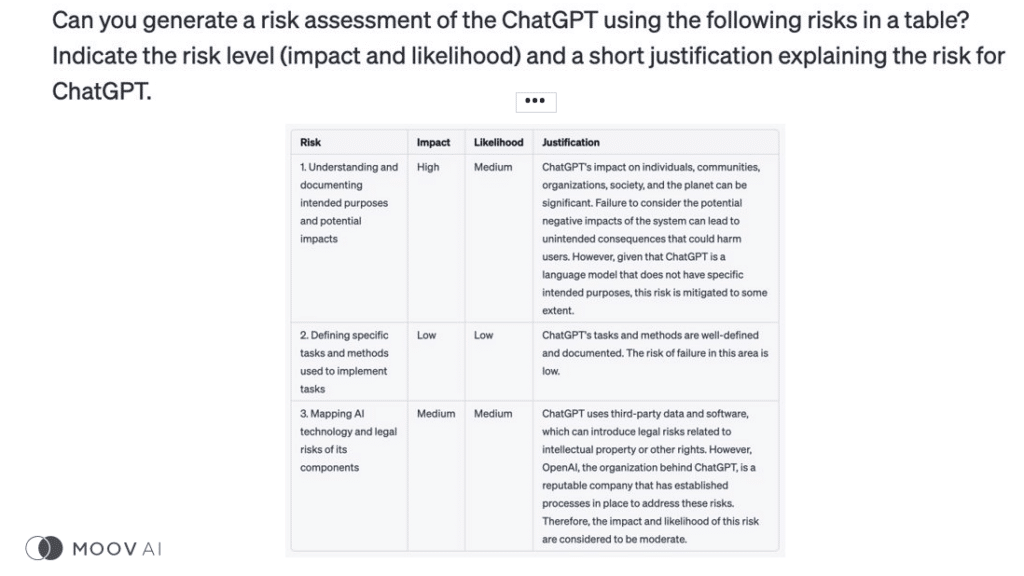

I thought, “Wouldn’t it be fun to use Generative AI tools to perform risk analysis of an AI solution?” I thought, “It would be amusing to ask ChatGPT to analyze the risk of ChatGPT itself. Let’s see if I can put it to the test.” I actually received some very interesting responses. First of all, it did a great job. I used a risk framework called NIST, which is highly recognized. I asked the question, “Here are the risks, can you assess the impact, the probability of their occurrence, and even provide justifications?” The task was really well done, and I am extremely satisfied. Here, I’ll give you three examples. The first example is about use cases. Is the use case a risky one? What’s interesting is that it’s not always straightforward, I think we know that, but it’s not due to hallucination or error; it’s a matter of perspective. So, the response I received was, “No, ChatGPT is designed for all use cases.

[22min 19s]

Since we don’t specifically target any use case, we have nothing to blame ourselves for.” I’m exaggerating, but I think it’s interesting because it gives us the impression that we try to avoid as a society. It’s like saying, “You know what? We’ll give the correct answer to everything, and then we can take a step back, and we’re not responsible for what happens.” Our responsibility is to prevent that from happening. It’s like saying, “No, no, look, for each use case, for each scenario we use, we’ll make sure it’s done properly.” So, we’ll be required… There’s an additional level. We can’t just rely on technologies to control each of these cases. It needs to be done in a subsequent step. Another aspect was about the methods for manufacturing, for creating the solution. And here, it made me think a lot. So, OpenAI creates its own model with two or three data scientists. I wonder who has the best capabilities for solution creation.

[23min 27s]

I think I’ll put a lot of my money on these platforms because they have highly qualified people, they have large teams. So, it made me think, and I agree with them. It’s true that the risk is lower. I think the risk is higher if Mr. or Mrs. Average Joe tries to create their own model because they may not have the best methodologies, they may not have the best expertise, and so on. So, there is also a significant benefit that can be gained with these solutions. Lastly, the last point I wanted to discuss is a risk in terms of legal security. And don’t worry, I’ll come back to it later. But something interesting, I got it here. What it told me is that ChatGPT uses third-party data, and that entails risks related to copyright and intellectual property. But all of this is intriguing. Firstly, transparency, being able to understand the model a bit more, but also that generative AI solutions are capable of performing tasks as critical as model risk assessment.

[24min 42s]

The guy who is involved with ISO standards is very happy with this type of exercise. Now, I’ll take an even bigger step back. I don’t know if you all agree to use generative AI. I think yes, I saw many hands raised, but some may not be. But I have some bad news for those who are less enthusiastic about using generative AI. You don’t have a choice. Unfortunately or fortunately. Why is that? It’s because technology organizations… Here’s an example from Google, but Microsoft has a very similar roadmap as well. They didn’t just create generative AI tools for everyone to have fun writing poems or creating summaries. They also used them to improve the services they offer to their clients. For example, here are three different levels of generative AI offerings by Google. The clearest one is to say, “I’ll help data scientists and people developing AI solutions to develop generative AI solutions.” I think that’s a given.

[26min 06s]

Everyone is aware that this was coming. But Google takes it a step further and says, “Wait a minute, you don’t always have to have data scientists. There aren’t many people developing AI solutions, but there are many more developers in the world. So, how can we assist developers in their development?” And that’s where the capabilities we’re starting to understand now when using existing solutions come into play. There’s the possibility of using the solutions as they are. For example, Google talks about helping with conversation, helping with search. These are very clear use cases that developers can deploy in existing solutions. They can develop their own applications, select the features they need, and adapt and adjust these features to enhance the end-user experience. And we can go even further than that. So, it’s not just for the average person; there are also business users who have started using tools like Dialogflow.

[27min 22s]

Google has several tools, and these tools will also have generative AI capabilities. What this means is that generative AI is here to stay. We just need to be able to use it effectively. And I have some more news for you: development continues to accelerate. I understand that some people may be happy to know that there are some lingering questions. Apparently, GPT-5 is not being developed, but that doesn’t change the fact that development is ongoing and intensifying. I can provide examples. We have LLaMa, and I’m not talking about the animal, even though there’s a picture of a very technological llama. But LLaMa is a tool that allows you to create your own models, your own internal ChatGPT, for example. So, that continues. We can see the frenzy. Everyone wants a LLaMa. I’m exaggerating. Personally, I would suggest not using that and instead using the right technologies. That’s my take on it. We have much better performance with the existing solutions that have already been tested by millions of people.

[28min 51s]

But I understand that it’s something interesting. It allows us to develop everything on our own laptop. Sure, the geek in me finds it exciting, but the business person finds it a bit overkill for what we’re creating. There are also companies that have their own capabilities in development. For example, Coveo. Coveo is very clear that they have already developed some generative capabilities. Coveo, which is one of Quebec’s gems. And there are other companies like Databricks, a major player in the ecosystem, that is developing Dolly, I believe. So, it will intensify. There will be more and more competition in the market. And there’s also a trend, I’ll just briefly mention it, called “auto GPT,” which is the ability to train GPT, a generative solution, with another generative solution, creating a loop. It’s scary, I agree. Again, it’s important to control it, but for now, it’s a trend that is more prevalent in development, automating certain workflows.

[30min 09s]

Really, it continues. It’s important to stay informed. It’s important to understand what is happening to ensure we use the best technologies to meet our needs. And to avoid risks, I’m going to talk about three different challenges we have currently. In terms of hallucinations, I’ve been talking about hallucination for a while. What is a hallucination? I’m not talking about hallucination in a desert. A hallucination is an error. Let me give you an example. Everyone has a brother-in-law who says things, he’s so convincing, but sometimes he doesn’t know what he’s talking about. I think everyone has had that brother-in-law or sister-in-law. That’s a hallucination. It’s an error. In the past, the OGs will remember that in a traditional model, you have errors, so sometimes you make incorrect predictions. In this case, it’s a wrong prediction, but it’s so convincing because it’s well-written. A hallucination is a bit more problematic because people who don’t necessarily have the ability to judge the output accurately could be deceived. So, here, you need to be careful every time you produce an output, every time you make a prompt, you need to look at what the result is.

[31min 43s]

Deepfakes, fake news, there are plenty of them, and there will be more and more. So, the ability to ask, “Write me a text about a certain topic,” without fact-checking and posting it on Facebook, is a problem. Why? Hallucination. I think we’re making the connection a bit. It’s much easier to write beautiful texts with false information than it used to be because before, you did it yourself or had it done by people in other countries. But now, it’s much easier. So, we need to ensure that every time we develop things, especially when it’s automated, we avoid the spread of fake news and deepfakes. And finally, in terms of privacy, I think everyone, if people are not aware of it now, I think we will be more and more aware of it. Let’s focus on it because it’s one of the problems we will increasingly see. There are horror stories right now, people copying, pasting trade secrets, putting them into tools. Ultimately, the information is distributed to big companies.

[32min 52s]

And there, you have just created significant security vulnerabilities. But that’s why at Moov AI, our stance is somewhat similar to what I mentioned earlier: it’s cautious optimism. In fact, I’ll use the quote from Uncle Ben, for those who remember Spider-Man, “With great power comes great responsibility.” That’s why we have decided to embark on this journey. We want to assist our clients because if we don’t, people will do it themselves, and they might do it poorly, promoting fake news and causing more problems than benefits. That’s why it’s important to provide guidance and support. That’s why we are actively involved, for example, in advocating for Canada’s Artificial Intelligence and Data Act, part of Bill C-27. We are helping to accelerate these efforts. We also have a prominent role in ISO standards to regulate and oversee the development and use of artificial intelligence. Our goal is not only to develop useful things but also to ensure proper control over them.

[34min 13s]

This can be leveraged in three different ways. Firstly, in the field of education. For instance, we have Delphine here, who oversees the Moov AI Academy. We will ensure that we assist individuals in achieving their objectives. That’s for certain. We will also contribute to the development of high-quality solutions. We have already begun doing so by employing machine learning methodologies to demonstrate the effectiveness of our solutions. If we can do it for traditional solutions and prove their worth before deploying them, we can do the same for generative AI solutions. Lastly, we aim to fully comprehend the risks associated with the solutions we undertake. Our objective is not to create more problems but to capitalize on opportunities. Now, let’s briefly discuss risks because it is crucial to address them. I brought you here for that purpose. Just kidding! However, it is essential to have a good understanding of risks. Here, I will discuss four main risks: functional, societal, information security, and legal. Let’s start with functional risks. When you build a feature, ultimately, a model is a feature.

[35min 33s]

Contrary to what ChatGPT was saying, we are not merely creating a platform that provides answers to everything. Our goal is to develop features that meet your specific needs. How can we do this effectively? From a societal standpoint, how can we ensure that we create a solution that is fair and ethical? The key is to ask the right questions. We also need to consider information security and legal aspects. Now, let’s go through the different risks. When it comes to best practices for functional risk, it is important to define the task you want to accomplish clearly. We have examined various tasks extensively. Therefore, it is crucial to break down the problem in the way we want to approach it. Just because we have a powerful tool at our disposal and can input any prompt doesn’t mean it will provide optimal responses for all scenarios. We shouldn’t overlook scoping and settle for just having a search bar where we can do anything. Ideally, we should ensure that we achieve good performance relative to our specific goals. That’s truly the foundation, and I highly recommend everyone to follow this approach.

Best Practices for Functional Risks

[36min 53s]

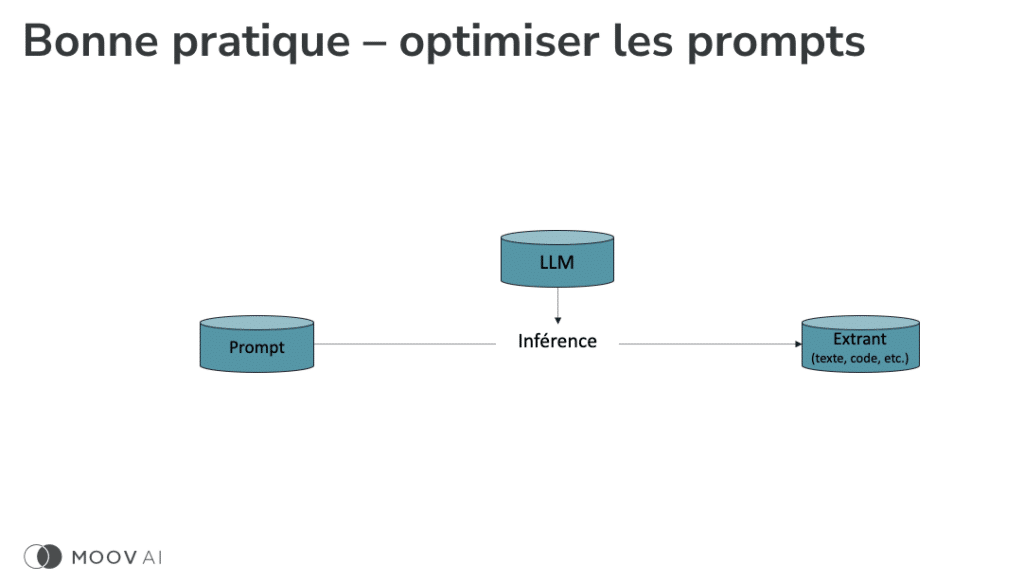

Next, what we want to do is optimize our approaches and prompts. I will show you how to do that shortly. And finally, you need to perform validation. It’s a machine learning tool, an artificial intelligence tool. You want to validate it with multiple data points, prompts, and scenarios, just like we do in traditional artificial intelligence. Just because it works once or twice doesn’t mean we can assume it always works. So, one of my recommendations is to use conventional approaches, approaches that have been proven for validation, and validate what we develop. “Okay, yes, it works.” And this is quantifiable. “Okay. What I wanted to develop works well 90% of the time.” So, you’re able to quantify the percentage of correct answers you obtain. This is highly valuable because it allows you to determine whether you’re shooting yourself in the foot or not by continuing the development. Now, let me give you an example regarding prompt optimization. What I mentioned earlier, and it’s really… I think everyone understands that it’s quite simplistic, is that writing a prompt like “Write me a poem about haystacks” isn’t what you’re going to transform into a process or a product.
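The validation step described above, running many prompts and quantifying how often the answer is correct, could be sketched like this in Python. It is a minimal illustration, not a full evaluation harness: `fake_model` is a hypothetical stand-in for a real model call, the test cases are invented, exact string matching is only one possible scoring rule, and the 90% target simply echoes the figure mentioned in the talk.

```python
from typing import Callable


def accuracy(model: Callable[[str], str], cases: list[tuple[str, str]]) -> float:
    """Share of test cases where the model output matches the expected answer."""
    if not cases:
        raise ValueError("need at least one test case")
    correct = sum(
        1
        for prompt, expected in cases
        if model(prompt).strip().lower() == expected.strip().lower()
    )
    return correct / len(cases)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in: a naive rule that a real model would replace."""
    return "positive" if "great" in prompt else "negative"


# Invented examples; a real validation set would hold many more scenarios.
test_cases = [
    ("The service was great", "positive"),
    ("I want a refund", "negative"),
    ("Not great at all", "negative"),  # the naive rule gets this one wrong
    ("Slow delivery", "negative"),
]

score = accuracy(fake_model, test_cases)
meets_target = score >= 0.90  # assumed target, taken from the 90% example
print(f"accuracy: {score:.0%}, meets target: {meets_target}")
```

Keeping the test cases in a fixed list means the same measurement can be repeated after every prompt change, so an improvement or a regression shows up as a number rather than an impression.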

[38min 29s]

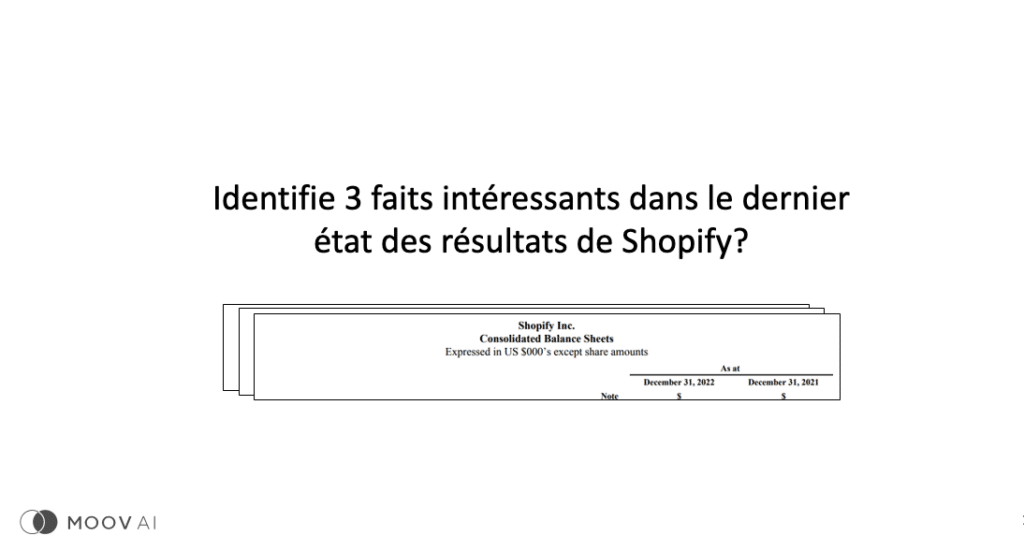

It’s not about that. It won’t work well. I like to use the expression “future-proof.” It’s not something that will allow you to deploy a solution that will work in the long term. Instead, what you’ll want to do is… Yes, let me give an example. I apologize. I’ll give an example. It’s like if I ask a question to a financial chatbot that I develop, such as “Identify three interesting facts in Shopify’s latest income statement.” Is that a legitimate question? No, excuse me, ChatGPT is only trained until 2021. Okay, but it doesn’t know that. And if you ask it a question about, for example, the financial statements of 2019, it will give you random answers. The numbers won’t be accurate. Why? Because it’s really far back in the tool’s memory. Instead, what you want to do, and the best way to avoid shooting yourself in the foot, is to provide relevant information to the model. Suppose instead that you manually find the financial statements and copy-paste them to test it.

[39min 42s]

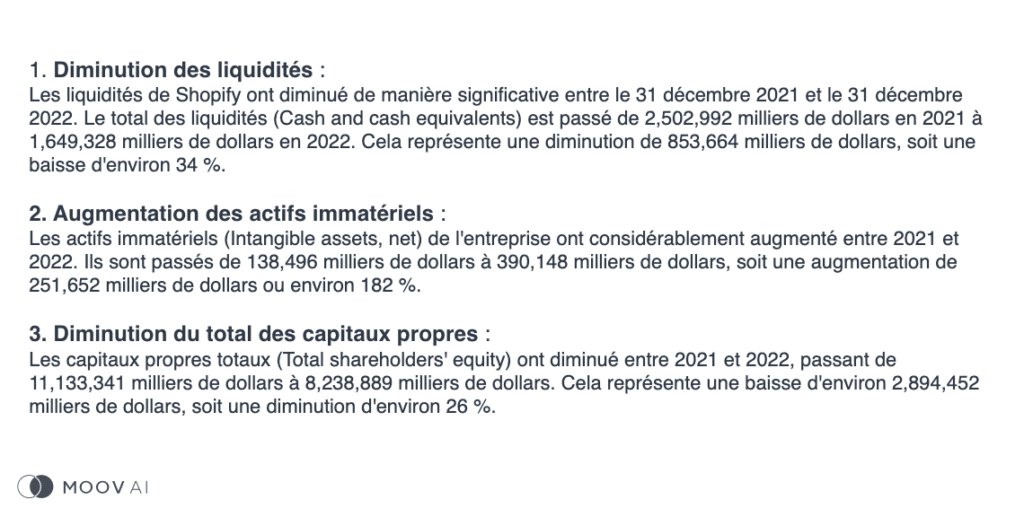

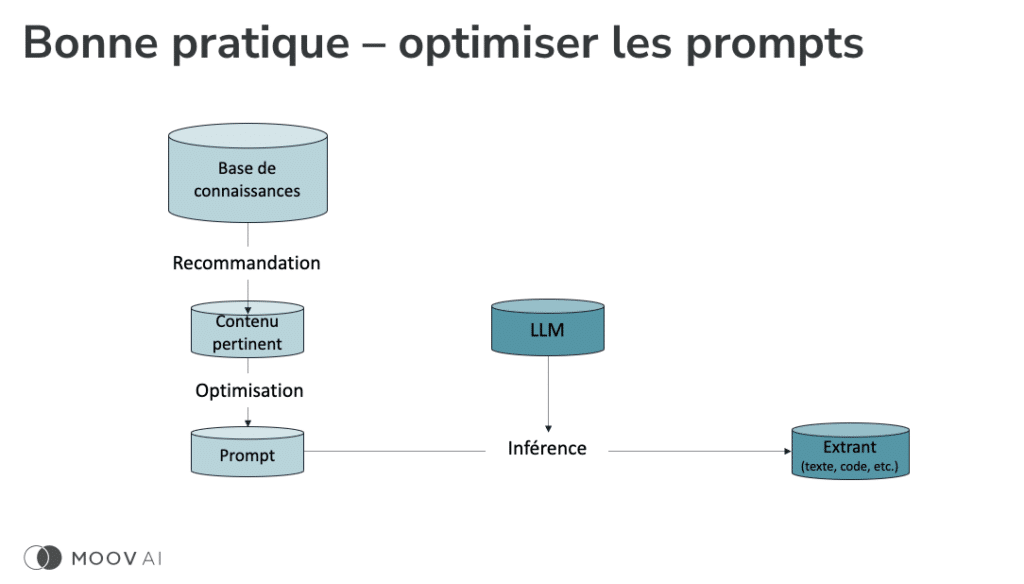

I suggest doing it, by the way. You will be pleasantly surprised, but do it programmatically within a solution. And then, you say, “Can you identify three interesting facts in Shopify’s income statements based on this document?” It’s full of numbers, difficult to read even for yourself, but Generative AI tools are capable of interpreting the information. And here, I’ve tested it. Will it give you a response? Yes, it will. And by the way, the numbers have been validated. I didn’t have them validated by Raymond Chabot Grant Thornton. I’m pushing it a bit, but not that much. But it provides real facts, the right information, and that’s future-proof. So, you’ve just created, yes, admittedly, a little extra complexity, but it’s worth it. Now, if I come back to my proposal, it would be to add information. Firstly, start with a knowledge base. Your knowledge base could be an FAQ, documents related to your company. For example, I want to know about my service offerings.
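Providing the document inside the prompt, instead of relying on what the model remembers, could look like the following Python sketch. The function name, the delimiter lines, the instruction wording, and the `max_chars` limit are all assumptions chosen for illustration; the sample statement uses bracketed placeholders, not real figures.

```python
def build_grounded_prompt(question: str, document: str, max_chars: int = 12000) -> str:
    """Wrap the question with the source document so the model answers from it."""
    # Crude length guard (assumed limit); a real solution would split long documents.
    excerpt = document[:max_chars]
    return (
        "Answer using only the document below. "
        "If the document does not contain the answer, say so.\n\n"
        f"--- DOCUMENT ---\n{excerpt}\n--- END DOCUMENT ---\n\n"
        f"Question: {question}"
    )


# Placeholder text standing in for a pasted income statement.
statement = "Revenue: [figure]\nOperating income: [figure]\nNet income: [figure]"

prompt = build_grounded_prompt(
    "Can you identify three interesting facts in this income statement?",
    statement,
)
print(prompt)
```

The resulting string is what would be sent to the model. Because the figures travel with the question, the answer no longer depends on the model's training cutoff.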

[40min 59s]

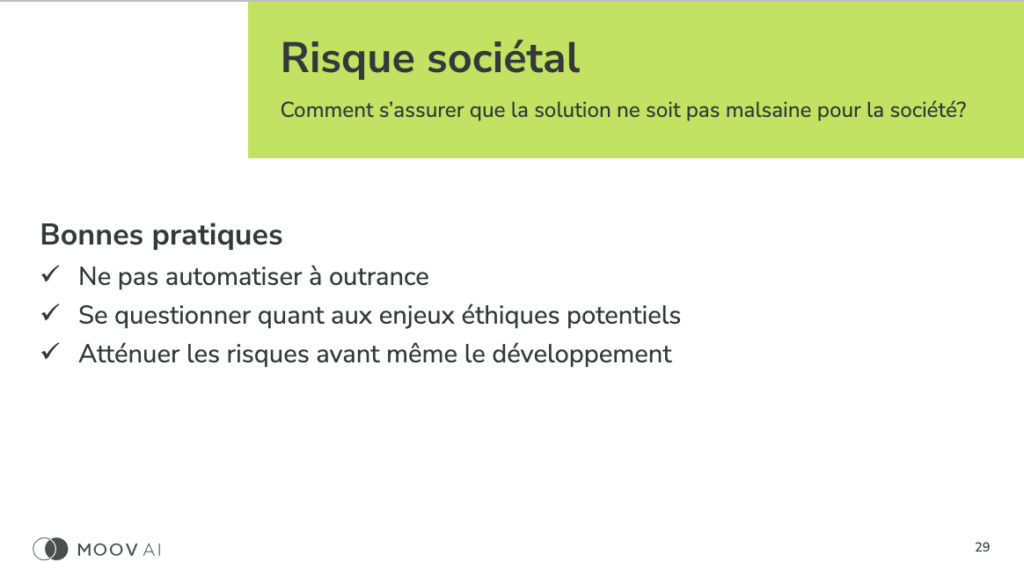

In my projects, I have post-mortems, I put them in a database, and after that, I can ask a generative AI tool questions, and it responds to me. You create a knowledge base, and then you go on to create, essentially, a recommendation tool: a simple search tool that recommends which information to add to your prompt. Then you optimize it, and there you have a tool that works well, hallucinates less, and is ready for the future. It’s this type of tool that I propose to use because otherwise, we shoot ourselves in the foot. In terms of societal risks, we need to ask the right questions. I mean, quickly, we need to ask the right questions. If you don’t ask the right questions, please don’t automate anything. Automation, when you don’t know what you’re doing, is the enemy. We don’t automate anything before knowing how to ask the right questions. But again, we need to ask the right questions. We don’t know what we don’t know, right?
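The knowledge base plus simple search tool described at the start of this passage could be sketched roughly as follows. This is a deliberately naive keyword-overlap search in Python, not the speaker's implementation. The function names and the sample knowledge-base entries are hypothetical, and a real solution would likely use a proper search index or embeddings.

```python
import re
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, passage: str) -> int:
    """Count how many query words also appear in the passage."""
    q, p = Counter(tokenize(query)), Counter(tokenize(passage))
    return sum(min(q[w], p[w]) for w in q)


def retrieve(query: str, knowledge_base: list, top_k: int = 2) -> list:
    """Return up to top_k passages that share at least one word with the query."""
    ranked = sorted(knowledge_base, key=lambda doc: score(query, doc), reverse=True)
    return [doc for doc in ranked[:top_k] if score(query, doc) > 0]


def build_prompt(query: str, knowledge_base: list, top_k: int = 2) -> str:
    """Add the retrieved passages to the prompt ahead of the question."""
    passages = retrieve(query, knowledge_base, top_k)
    context = "\n".join(f"- {p}" for p in passages) or "- (no relevant passage found)"
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    # Hypothetical knowledge-base entries, for illustration only.
    kb = [
        "Post-mortem: the data migration project slipped because test data arrived late.",
        "FAQ: our service offerings include AI strategy and model validation.",
        "Post-mortem: the chatbot pilot needed clearer escalation rules.",
    ]
    print(build_prompt("What are our service offerings?", kb, top_k=1))
```

The "optimize it" step mentioned in the talk would then amount to improving `score` and `retrieve` while leaving the prompt assembly unchanged.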

Societal Risks and Asking the Right Questions

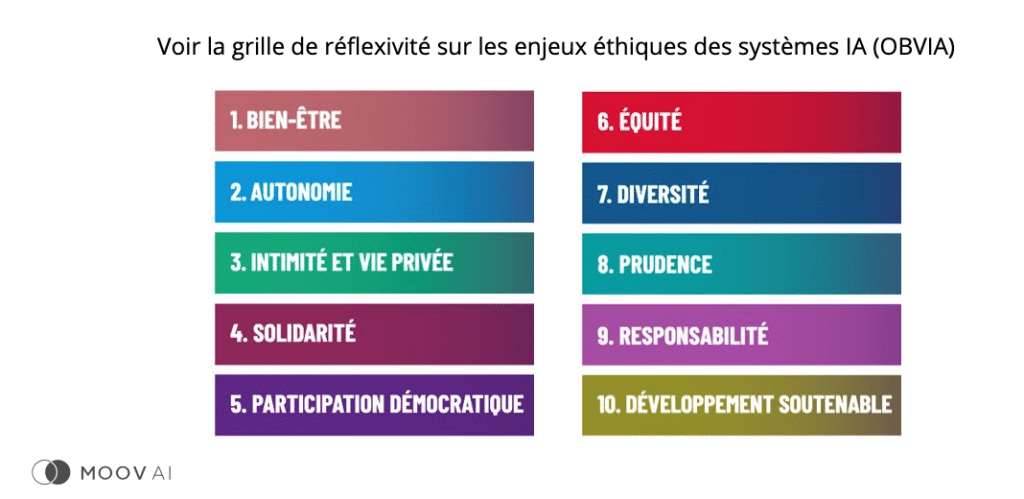

[42min 10s]

There are tools for that. I can share them with you. What I’m going to do is, later on, I’ll share a list of tools with you. One of the tools I like is called the reflexivity grid. I didn’t know it was a word, but apparently, it is. It’s about the ethical issues of AI systems, and it was developed by OBVIA, which is a Quebec organization. And here, for example, we have the ten commandments, the ten subjects, ten categories of risk. It’s really interesting because this grid provides very specific questions. For example… But these are questions that make you think. I’ll give you some examples. Can your system harm the user’s psychological well-being? In some cases, yes. About a month ago, there was a suicide that happened due to excessive use of a chatbot. I know it’s a “cherry-picked” example, but it just shows that there can be a connection between psychological well-being and the use of technology. We just need to be able to understand whether or not our system can affect society.

[43min 31s]

In terms of privacy, of course, we discussed it earlier. In terms of caution, what’s the worst that can happen? Do we have mechanisms to prevent the worst from happening? The worst that can happen is information sharing. If it’s just internal information sharing, but then you always have someone who will validate and correct it, it’s not a big risk. But if you end up creating something that is automatic and it sends false information or makes financial decisions, we recommend having mechanisms to mitigate these risks. In terms of responsibilities, who is ultimately responsible for the solution? We can’t just say, “I rolled something out, I pressed ‘run’.” It runs, it’s supposed to perform a task, but there’s no one in the organization who is in charge. The answer is not “Google is in charge because it’s their solution.” No, no, no. You are in charge of what you develop. So, in other words, if we think we’re going to use ChatGPT to automate a department, I have some news for you.

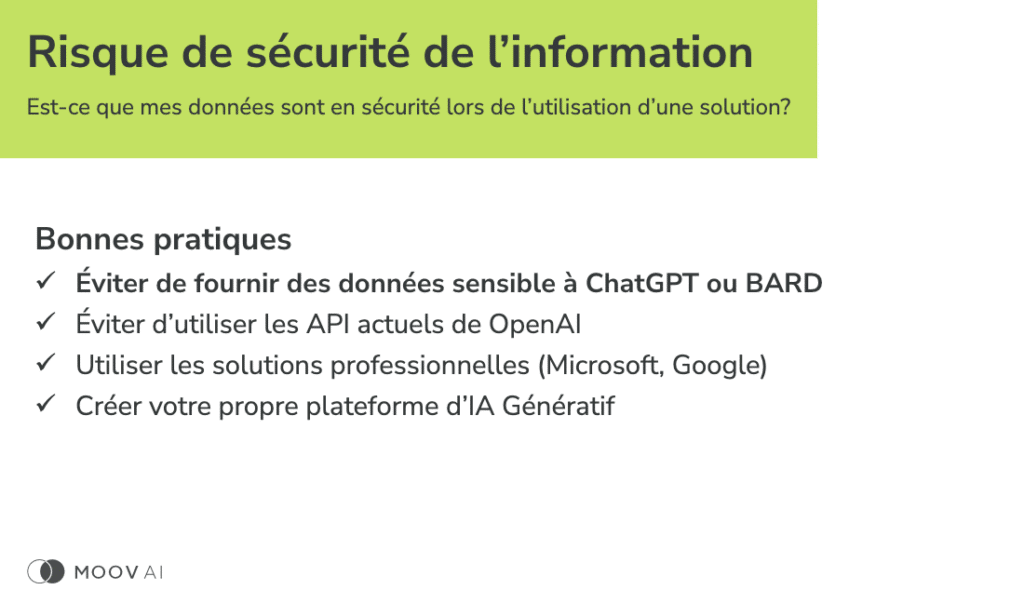

Information Security and API Usage

[44min 44s]

There will be someone in charge of this new virtual department, and all the bad decisions that are made will be attributed to that person. Are we ready as an organization to do that? That’s another good question. I strongly suggest asking yourselves the right questions and trying to answer them as best as possible. That doesn’t mean you will cancel your projects, but you might structure them differently. In terms of information security, what I’m going to propose, as seen in bold, is that first and foremost, starting today, avoid putting sensitive information into either Bard or ChatGPT. These are two solutions where information is passed to the creator. It’s not because of reasons like “We want to steal the information to dominate the world.” It’s to capture usage patterns and learn how the technology is being used. But we should avoid it because it poses a risk to the security of our company’s information. And personally, do not enter your social insurance number. There are many things we want to avoid doing.
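One way to act on the advice about sensitive information is to scrub prompts before they leave your environment. The Python sketch below is only an illustration under stated assumptions: the two patterns (a nine-digit number written as three groups of three digits, and an email address) and the `redact` function are hypothetical, far from exhaustive, and can produce false positives or miss other kinds of sensitive data.

```python
import re

# Illustrative patterns only; a real deployment would need a broader, reviewed list.
SIN_PATTERN = re.compile(r"\b\d{3}[- ]?\d{3}[- ]?\d{3}\b")
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def redact(prompt: str):
    """Replace matches with placeholders and report which kinds were found."""
    findings = []
    for label, pattern in (("SIN", SIN_PATTERN), ("EMAIL", EMAIL_PATTERN)):
        prompt, count = pattern.subn(f"[{label} REDACTED]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


if __name__ == "__main__":
    clean, found = redact("My SIN is 123 456 789, email me at jane@example.com")
    print(clean)
    print(found)
```

Returning the list of findings alongside the cleaned text lets the calling application warn the user or block the request instead of redacting silently.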

[46min 05s]

“I wonder if anyone can find my name based on my social insurance number.” That’s not a good prompt. Also, the other element, that’s for B2C tools that are free. We know there is nothing free in this world. That’s an example. But for now, what I propose is to avoid using OpenAI’s APIs. Currently, OpenAI uses the information for retraining, so I would avoid the current tools in cases where information security is more critical, for example, when creating a chatbot where it’s really the end user who communicates. In that case, you cannot control the information that is disclosed, so I would probably use other alternatives, such as using Google or Microsoft directly. Professional services guarantee that the information will not leave our own environment. That’s much more reassuring. And then, here, I have a small proposal for companies, one that I’m starting to hear about and that people are adopting, which is to create their own generative AI platform. We are starting to see it more and more, and the use case is very simple. Say I work at Pratt & Whitney Canada and I start using ChatGPT with copy and paste because I want to validate spare parts: what are the instructions for this material, and which spare parts are needed for this particular repair?

Legal Risks and Copyright Considerations

[47min 51s]

It’s a good use case, but if you test it, it means you’ve just provided your engine’s technical specifications to OpenAI. You probably don’t want to do that. Instead, what can you do? You can use professional versions. Firstly, you could use professional versions, whether it’s Google or Microsoft, both offer these capabilities, and you create an interface, and that’s it. So, you’ve just created an interface, you can call it whatever you want. Pratgpt, you can have fun with it, add nice colors. You’ve just reduced a risk in using the technology. You’re not preventing it. The worst thing would be to prevent the use of generative AI because it’s impossible to prevent its use. Instead, you control the security around it, and you can even go further. Earlier, I was talking about a knowledge base. With my Pratgpt, I can give it access to all my technical documentation. That way, I can ask questions, and it provides accurate answers. You can create this interface and truly benefit your organization. That’s an example that, as such, solves many problems.
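The internal interface idea can be pictured as a thin gateway that every employee request goes through. The Python sketch below is a rough illustration, not a reference design. The `InternalAssistant` class, its fields and the injected `backend` callable are hypothetical names, and passing all documentation into every prompt is a simplification that a real tool would replace with a search step.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class InternalAssistant:
    """Single entry point in front of a company-approved model backend."""

    backend: Callable[[str], str]  # stand-in for the approved provider's client
    technical_docs: List[str] = field(default_factory=list)
    audit_log: List[dict] = field(default_factory=list)

    def ask(self, user: str, question: str) -> str:
        # Simplification: include all documentation in the prompt.
        context = "\n".join(self.technical_docs)
        prompt = f"Company documentation:\n{context}\n\nQuestion: {question}"
        answer = self.backend(prompt)
        # Record who asked what, so usage can be reviewed later.
        self.audit_log.append(
            {
                "user": user,
                "question": question,
                "at": datetime.now(timezone.utc).isoformat(),
            }
        )
        return answer


if __name__ == "__main__":
    def fake_backend(prompt: str) -> str:
        # Placeholder so the sketch runs without any external service.
        return f"(answer based on {len(prompt)} characters of prompt)"

    assistant = InternalAssistant(fake_backend, ["Hypothetical repair manual excerpt."])
    print(assistant.ask("alice", "Which parts does this repair need?"))
    print(len(assistant.audit_log))
```

Because the backend is injected, the organization decides in one place which provider receives the data, which is the control point the talk argues for.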

[49min 12s]

I’m a solution-oriented person, which is why I like proposing this solution because it’s so elegant. Ultimately, we’ll end up with legal risks. We talk about it a lot: copyright, plagiarism. Yes, there can be those issues. So, firstly, what I propose is to assess the risk of that happening. There are certain use cases, like sentiment analysis, where there is none. You’re asking if something is positive or negative based on information. So, there’s no risk there. In some cases, the risk will be zero or negligible, but in some cases, the risk will be very high. As a journalist, if I want help in creating my article, there’s a higher risk of plagiarism and intellectual property issues. If I want to automate code development, I might end up using portions that have a commercial license, which I shouldn’t be able to use. And you don’t know it because the output is so elegant that sometimes you can be caught off guard. In these extreme cases, where there is a significant risk in terms of copyright, I would suggest avoiding the use of ChatGPT because GPT is one of the solutions currently available that hasn’t clarified whether it only uses publicly available information.

Alternative Solutions for Copyright Risks

[50min 55s]

So their position is currently unclear. In these cases, it’s not very common, but still, when it happens, there’s GPT-3, there’s GPT-4, there’s PaLM, there are several other tools that can be used to address this issue. Additionally, you can approach the problem differently. Instead, you generate something and then have it validated. For example, from a journalistic perspective, there are tools that can check for plagiarism. So, you can add and refine your tool to minimize risks. I hope I haven’t put anyone to sleep talking about risks, but it’s important to me. So thank you for staying. I haven’t seen anyone yawning. I’ll give myself a pat on the back. In conclusion, I’ve said it before, and I’ll say it again. It’s important to get on board now, to embrace the technology. There are so many benefits that can be reaped.
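The "generate something and then have it validated" step mentioned above for copyright risk could look something like the following Python sketch. It flags generated text that shares many five-word sequences with known reference texts. This is a crude stand-in for a real plagiarism checker: the function names, the shingle size and the threshold are all assumptions chosen for illustration.

```python
import re


def shingles(text: str, n: int = 5) -> set:
    """Return the set of n-word sequences found in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(generated: str, reference: str, n: int = 5) -> float:
    """Share of the generated text's n-word sequences also found in the reference."""
    gen = shingles(generated, n)
    if not gen:
        return 0.0
    return len(gen & shingles(reference, n)) / len(gen)


def needs_review(generated: str, references: list, threshold: float = 0.2, n: int = 5) -> bool:
    """Flag the text for human review if any reference overlaps too much."""
    return any(overlap_ratio(generated, ref, n) >= threshold for ref in references)


if __name__ == "__main__":
    reference = "the quick brown fox jumps over the lazy dog near the river bank"
    draft = "generative models can summarise long financial documents quickly"
    print(needs_review(reference, [reference]))
    print(needs_review(draft, [reference]))
```

A check like this only catches near-verbatim reuse against texts you already hold, so it complements rather than replaces a human reviewer or a dedicated plagiarism service.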

[52min 08s]

With that, thank you very much for listening.

Olivier is co-founder and VP of decision science at Moov AI. He is the editor of the international ISO standard that defines the quality of artificial intelligence systems, where he leads a team of 50 AI professionals from around the world. His cutting-edge AI and machine learning knowledge has led him to implement a data culture in various industries.